BREAKING NEWS

- Arizona indicts Trump allies Giuliani, Meadows, 16 others in 2020 election scheme

- Joe Biden’s re-election campaign won’t stop using TikTok, despite US divestment-or-ban bill

- US trade report keeps China on priority watch list as Blinken visit begins

- Japan’s latest military gamble reflects a changing security landscape

- Gustav Klimt painting auctioned for US$32 million was the subject of a claim of ownership just before its sale

- Xiaomi Mix Fold 4 Tipped to Get Snapdragon 8 Gen 3 SoC, Upgraded Quad Rear Cameras

- Xiaomi 14T, Xiaomi 14T Pro Reference Spotted on HyperOS Code, Unlikely to Launch in India: Report

- Redmi Pad SE Confirmed to Launch in India on April 23; Design, Key Features Teased

- Redmi Pad Pro May Launch Globally Soon; Spotted on FCC Site With HyperOS

- Redmi Pad Pro Indian, Global Variants Allegedly Surface on Google Play Console Database

Latest Stories

Tech & Gadgets

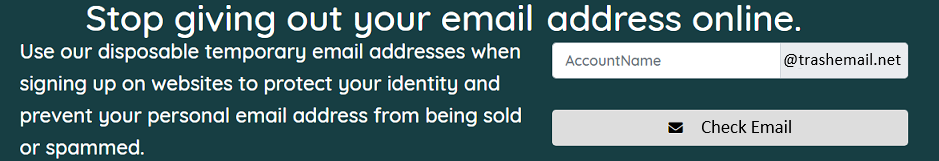

Tired of getting those mysterious password reset emails? Here’s what to do about it

Passwords can definitely be a frustrating part of our lives. Remembering which passwords you used for your dozens of different accounts is…

Read More...

Fox News AI Newsletter: AI predicts your politics with single photo

The study used AI to predict people's political orientation based on images of expressionless faces. (American Psychologist) Welcome…

Read More...

This off-road teardrop trailer adds luxury camping to the most remote locations

Are you looking for a camper that breaks away from the conventional teardrop design and blends functionality with sleek aesthetics? Meet the…

Read More...

The AI camera stripping away privacy in the blink of an eye

It's natural to be leery regarding the ways in which people may use artificial intelligence to cause problems for society in the near…

Read More...

11 insider tricks for the tech you use every day

If you're the person skipping updates on your devices … knock that off. You're missing out on important security enhancements—like iOS 17.4,…

Read More...